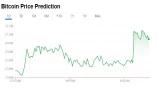

Bitcoin Fees Rise to $5 as Network Usage Reaches All Time High

Bitcoin fees have risen tenfold this month, from 50 cents to just above five dollars, with bitcoin miners receiving around $1.5 million a day from transaction fees.

That’s the highest they have been in nearly a year, since June 2019, but considerably lower than the $50 they reached during the peak of prices in December 2017.

As we can see above, fees were a bit higher even in June 2017, and quite a bit higher in August 2017, and then they balloon.

That’s despite the price being quite a bit lower in both June and August 2017, with this difference explained by the fact that back then there was less capacity.

That capacity was increased with the soft-fork activation of Segregated Witness (segwit) on the 24th of August 2017, less than a month after the Bitcoin Cash (BCH) chain-split on August first of that year.

That segwit activation increased bitcoin’s capacity, with it now reaching an all time high of a daily average of just above 1.3 MB of data per block, and a weekly average of just below 1.3MB.

This is the highest amount of data processing of any blockchain in history, with many now closely watching to see just how much higher it will go.

As you may know segwit implements an ‘accounting’ system that differentiates between raw data and signature data.

A signature, as in real life, is the proof of ownership, here in digital form. You have a public and a private key, and the digital calculations in that ‘mating’ of the two are discounted by 75% compared to the other information, such as how much bitcoin you are sending.
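To put a rough number on that discount, here is a minimal sketch of the segwit accounting as defined in BIP 141, where each byte of signature (witness) data counts as one weight unit and each other byte counts as four. The function names and the example byte counts are illustrative assumptions, not figures taken from a real transaction.

```python
import math

WITNESS_SCALE_FACTOR = 4  # BIP 141: non-witness bytes weigh 4x witness bytes


def tx_weight(non_witness_bytes: int, witness_bytes: int) -> int:
    """Weight units: 4 per non-witness byte, 1 per witness (signature) byte."""
    return non_witness_bytes * WITNESS_SCALE_FACTOR + witness_bytes


def tx_vsize(non_witness_bytes: int, witness_bytes: int) -> int:
    """Virtual size in vbytes, the figure fees are usually quoted against."""
    weight = tx_weight(non_witness_bytes, witness_bytes)
    return math.ceil(weight / WITNESS_SCALE_FACTOR)


# Hypothetical transaction: 120 bytes of ordinary data, 108 bytes of signatures.
raw_size = 120 + 108
print(raw_size, tx_weight(120, 108), tx_vsize(120, 108))
```

In this made-up case the transaction occupies 228 bytes on disk but is accounted for as 147 virtual bytes, because the 108 signature bytes count for only a quarter of their raw size.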

A lot of work has gone into compressing both signature and raw data, to effectively allow for more transactive acts in the same data space.

That means in the live environment we don’t actually know what bitcoin’s current maximum capacity is, with it theoretically having a limit of 4MB.

That’s very theoretical, however, because it can only be reached if all the data is signature data, as in just proof of ownership.
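The range between 1MB and 4MB follows from how segwit counts data. As a sketch, assuming a block that is completely full at the 4,000,000 weight unit limit of BIP 141, the raw size of that block depends only on what share of its bytes is signature (witness) data. The `witness_fraction` parameter and the function name are hypothetical, chosen for illustration.

```python
MAX_BLOCK_WEIGHT = 4_000_000  # weight units per block (BIP 141)


def max_block_bytes(witness_fraction: float) -> float:
    """Largest raw block size in bytes for a given share of witness data."""
    if not 0.0 <= witness_fraction <= 1.0:
        raise ValueError("witness_fraction must be between 0 and 1")
    # weight = 4 * non_witness + 1 * witness = size * (4 - 3 * witness_fraction)
    return MAX_BLOCK_WEIGHT / (4 - 3 * witness_fraction)


for fraction in (0.0, 0.5, 1.0):
    size_mb = max_block_bytes(fraction) / 1e6
    print(f"{fraction:.0%} witness data -> {size_mb:.2f} MB")
```

With no witness data at all the limit is the old 1MB, with half witness data it is 1.6MB, and only a block made of nothing but witness data would reach 4MB.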

You obviously need other data, like the from and the to, but most of the data is the proof of ownership and other cryptographic information. So much so that, for example, you can have a transaction of just 20 bytes if the circa 250 bytes of all the other information is already contained, with any other information required on top for a new transaction therefore being less than 10%.

If you add the Lightning Network (LN) you then get even more compression, with LN currently still a bit raw and not much used, but for frequent transactions theoretically there isn’t a relevant limit in the current context of blockchain capacity.

As it stands we can’t currently see easily the LN part of the transaction layer and what activity is happening there, but what we can see is that the on-chain data processing is increasing, and thus demand for the bitcoin network is rising, and the bitcoin network is able to accommodate it because the megabytes are increasing per block.

It can rise on-chain up to a variable rate because we have not seen the actual limit. That rate is variable as it depends on how each user, on their end, compresses or combines the different methods to fit more transactions within the same or smaller data requirements, but the maximum is unlikely to be more than 2.7MB per block.

Meaning bitcoin may have available twice the currently used capacity, raising the question of why fees have then jumped.

Bitcoin is developed by volunteer coders who receive no direct pay and are under no managerial orders or enforceable aims and targets.

It’s a work of ‘love’ and that translates to the coders not necessarily caring about your convenience or your lack of knowledge or your laziness.

As such, you need to have a mini university masters to figure out the plumbing. The coders are usually fairly informative, because they do present at conferences and so provide a general idea of just what they have designed in their very specialized hobby or work on protocol development.

What has long been known, relatively speaking, is that segwit is opt-in. So if you run a node or a bitcoin related processing system, you can choose to adopt or not adopt segwit.

We’re obviously dealing here with a bearer asset and an irreversible one so security has to be at the level of critical infrastructure. Meaning it may take some time for an exchange handling billions to go through the whole process of safely taking advantage of new features.

Thus bitcoin’s capacity is a moving thing: it is bigger the more entities take advantage of the optional added capacity, which accounts for data differently than the fixed 1MB limit that there used to be.

Then you have potential compression methods within the upgraded bitcoin which likewise need some mini PhD of their own, and again in critical infrastructure these things do take their time.

Therefore practically bitcoin is currently running at its limit – hence the increased fees – but not at the available limit which has to be ‘unlocked’ by clusters engaging in the necessary work to take advantage of the available capacity.

This surface complexity nonetheless has ended up giving bitcoin the highest utilized capacity in any current blockchain.

There are of course blockchains which can handle far higher capacity, but have a far lower utilization of it.

There are also blockchains, like ethereum in particular, which have close to the same utilization levels, but lower capacity.

Ethereum currently runs at the equivalent of 1MB every ten minutes, with fees lower there at about 10 cents, but a little spur and there too they’d reach five dollars for a simple transaction, and a lot more for a smart contract transaction. The latter has almost always been at around 50 cents, but that depends on the complexity of the smart contract transaction, so sometimes, even when it might appear there is plenty of capacity, a smart contract transaction there can cost $2 or more.

That’s because ethereum has this gas measure rather than bytes: an abstract unit of measurement related to the number of actions a transaction has to take. That abstract gas unit is still translatable into bytes, which amounts to total actions in eth not currently being able to exceed about 1MB every ten minutes.
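As a rough sketch of how gas turns into a dollar fee: a transaction pays for the gas it uses multiplied by the gas price it offers. A plain eth transfer always uses 21,000 gas, while a smart contract call uses more depending on what it does. The gas price, the eth price and the 150,000 gas contract call below are hypothetical values picked for illustration, not market data.

```python
GWEI_PER_ETH = 1_000_000_000
SIMPLE_TRANSFER_GAS = 21_000  # fixed gas cost of a plain eth transfer


def fee_usd(gas_used: int, gas_price_gwei: float, eth_price_usd: float) -> float:
    """Fee in dollars = gas used * gas price (converted to eth) * eth price."""
    fee_eth = gas_used * gas_price_gwei / GWEI_PER_ETH
    return fee_eth * eth_price_usd


# Hypothetical market conditions, for illustration only.
gas_price_gwei = 20
eth_price_usd = 200

print(fee_usd(SIMPLE_TRANSFER_GAS, gas_price_gwei, eth_price_usd))
print(fee_usd(150_000, gas_price_gwei, eth_price_usd))  # assumed contract call
```

Under these assumptions the simple transfer costs a bit under 10 cents and the contract call around 60 cents, which is why the same level of congestion is felt more by smart contract users.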

We can see above that ethereum has been at full capacity for more than two years and, unlike in bitcoin, this isn’t variable insofar as entities can’t tap into available capacity.

Instead, ethereum miners have to decide whether or not to increase capacity, with miners deciding to increase it by only 200kb per ten minutes in the past three years.

Ethereum of course has plans to increase capacity, as does bitcoin and everyone else, including new projects that are working very hard to crack this crucial aspect of global blockchain networks. The aim is to facilitate more value transfers or world computer actions while still allowing the storage of data on anyone’s laptop, so keeping the network decentralized to the point that even this more pen than code writer could chain split if wanted.

Yet the future is to be judged when it arrives. In the present, it is perhaps comforting to some that the whole scalability debate did lead to more capacity for bitcoin and more available capacity than any other utilized blockchain, and even led to the highest data processing by any blockchain network since their invention.

Copyrights Trustnodes.com
